This article describes my observations of network throughput and latency for traffic between spoke VNets in two hub-spoke deployments in different Azure regions, using three different implementation options.

Cross-region connectivity is typically required when hosting applications in active-active mode across multiple regions for high availability and resilience to regional failures. The two use cases I wanted to address are:

- Database replication traffic (e.g. Azure SQL Managed Instance (SQLMI) replication for failover groups).

- Application traffic from a web app in one region to the primary database in another region.

Below are three options (using the hub-spoke topology) for enabling cross-region connectivity between two Azure regions for these use cases, with the workloads hosted in spoke VNets. For testing, I used VMs as the source and destination resources in the spoke VNets, and VMs with IP forwarding enabled to simulate routers/NVAs in the hub VNets. In a real deployment, these hub VMs would be replaced by either Azure Firewall instances (expensive!) or third-party NVAs (PAN-OS, Fortinet, etc.).

NOTE: For completeness, I've included route tables with User-Defined Routes (UDRs) for all options, assuming that all traffic between on-premises and the spoke VNets, in either direction, must be steered through the hub routers/NVAs.
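To make that UDR assumption concrete, here is a minimal Python sketch of the two route tables this pattern typically needs in each region: one on the spoke subnet that sends on-premises-bound traffic to the hub NVA, and one on the hub's GatewaySubnet that sends spoke-bound traffic arriving from the ExpressRoute gateway to the same NVA. The on-premises prefix, spoke prefix and NVA IP address are hypothetical, and the dictionaries only loosely mirror the ARM route-table properties; this is not a deployment template or an Azure SDK call.

```python
from typing import Dict, List

# Hypothetical addressing for one region (not taken from the article's diagrams).
ONPREM_PREFIXES = ["192.168.0.0/16"]  # on-premises space advertised over ExpressRoute
SPOKE_PREFIXES = ["10.10.0.0/16"]     # spoke VNet address space in this region
HUB_NVA_IP = "10.1.0.4"               # private IP of the hub NVA (or Azure Firewall)


def udr(prefix: str, nva_ip: str) -> Dict[str, str]:
    """One user-defined route that sends `prefix` to a virtual appliance."""
    return {"addressPrefix": prefix, "nextHopType": "VirtualAppliance", "nextHopIpAddress": nva_ip}


def spoke_route_table(onprem: List[str], nva_ip: str) -> Dict[str, object]:
    """Spoke subnet: send spoke -> on-premises traffic to the hub NVA."""
    # Disabling gateway (BGP) route propagation stops more-specific on-premises
    # routes learned over ExpressRoute from bypassing the NVA.
    return {"disableBgpRoutePropagation": True, "routes": [udr(p, nva_ip) for p in onprem]}


def gateway_subnet_route_table(spokes: List[str], nva_ip: str) -> Dict[str, object]:
    """GatewaySubnet: send on-premises -> spoke traffic to the hub NVA."""
    # Gateway route propagation must stay enabled on the GatewaySubnet.
    return {"disableBgpRoutePropagation": False, "routes": [udr(p, nva_ip) for p in spokes]}
```

For example, `spoke_route_table(ONPREM_PREFIXES, HUB_NVA_IP)` yields a single route for `192.168.0.0/16` with next hop `VirtualAppliance` at `10.1.0.4`. The same two tables would be repeated in the second region with that region's prefixes and NVA IP.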

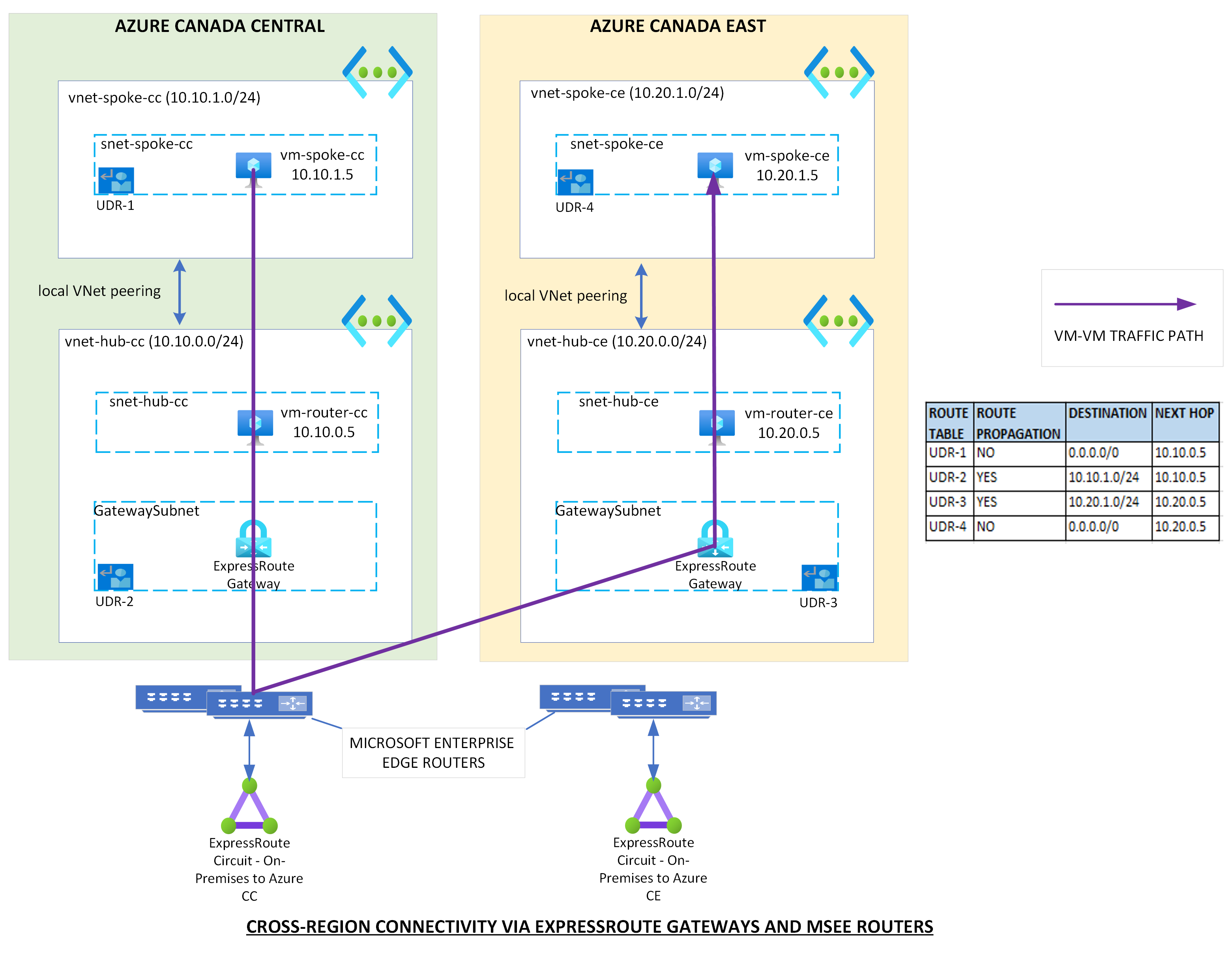

OPTION 1 : ExpressRoute Gateway

Pre-requisites:

- ExpressRoute is used for connectivity between on-premises and Azure.

- The hub VNets in both Azure regions are connected to the same ExpressRoute circuit.

Implementation:

If you meet the pre-requisites, there's nothing further to do. Because both hub VNets are connected to the same ExpressRoute circuit, they're part of the same routing domain, so the spoke VMs can communicate with each other through their locally peered hubs and ExpressRoute gateways (unless you're blocking the traffic in the hub NVAs), as shown in the image below.

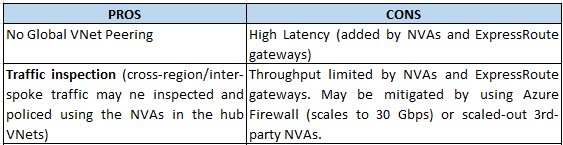

OPTION 2 : Hub-to-Hub Global VNet peering

Pre-requisites:

- Global VNet peering between the hub VNets

Implementation:

With the hub VNets connected via Global VNet peering, you can use UDRs to steer traffic between your spoke VNets over the hub-to-hub peering, as shown in the images below.

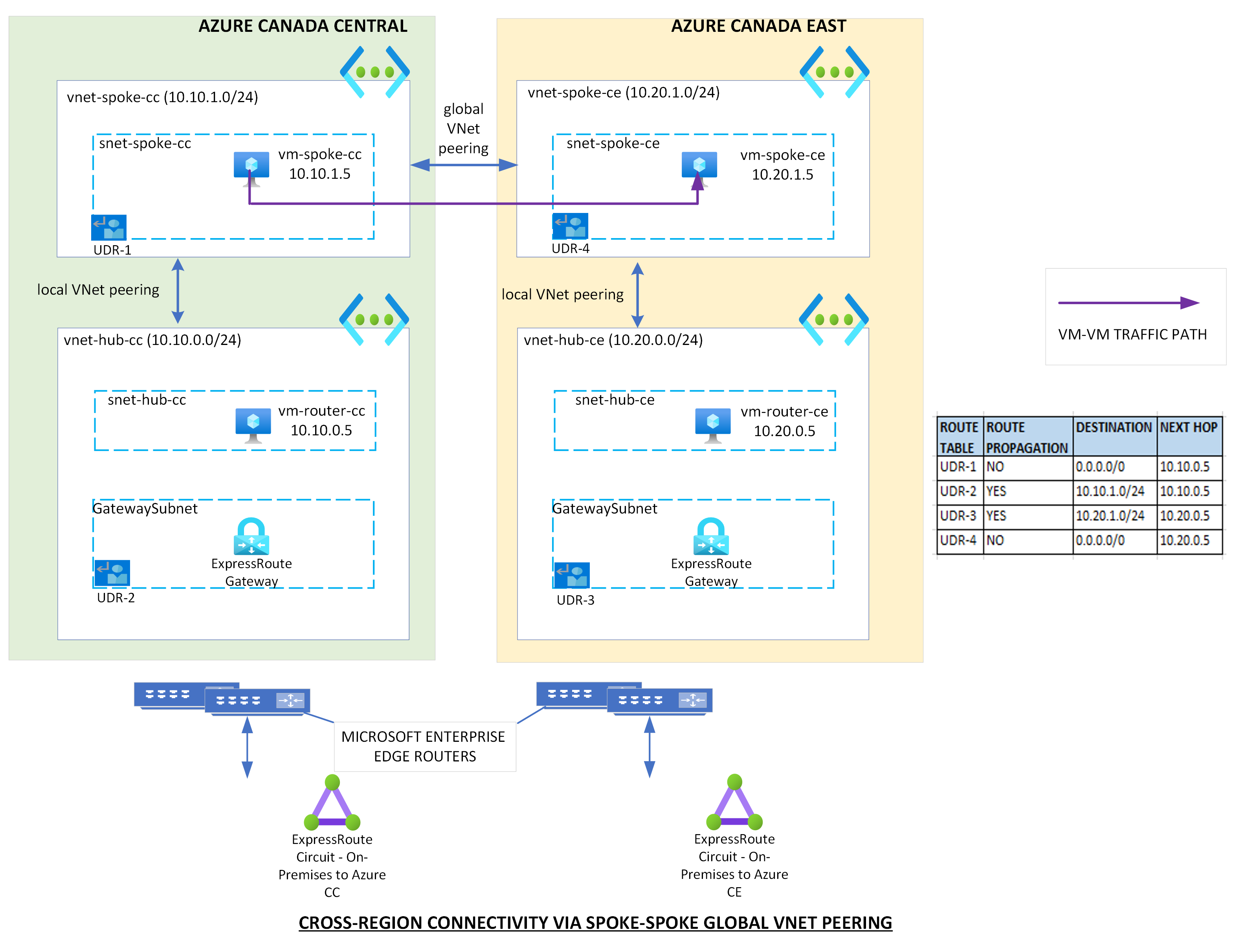

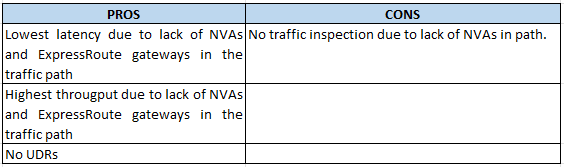
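The UDR-driven path for OPTION 2 can be traced hop by hop. The Python sketch below applies longest-prefix matching to a hypothetical route table per node and follows next hops until a route delivers the packet. The spoke prefixes (10.10.0.0/16, 10.20.0.0/16) and node names are assumptions, and `DELIVER` stands in for the system route of the local hub-spoke peering.

```python
import ipaddress
from typing import Dict, List, Tuple

RouteTable = List[Tuple[str, str]]  # (destination prefix, next hop)

# Hypothetical route tables for OPTION 2, keyed by the node that owns them.
ROUTES: Dict[str, RouteTable] = {
    "spoke1-vm": [("10.20.0.0/16", "hub1-nva")],   # UDR on spoke 1 subnet
    "hub1-nva": [("10.20.0.0/16", "hub2-nva"),     # UDR: across hub-to-hub peering
                 ("10.10.0.0/16", "DELIVER")],     # local hub-spoke peering
    "hub2-nva": [("10.10.0.0/16", "hub1-nva"),     # UDR: across hub-to-hub peering
                 ("10.20.0.0/16", "DELIVER")],     # local hub-spoke peering
    "spoke2-vm": [("10.10.0.0/16", "hub2-nva")],   # UDR on spoke 2 subnet
}


def next_hop(table: RouteTable, dst: str) -> str:
    """Longest-prefix match of dst against one route table."""
    addr = ipaddress.ip_address(dst)
    matches = [(ipaddress.ip_network(p), hop) for p, hop in table
               if addr in ipaddress.ip_network(p)]
    if not matches:
        raise LookupError(f"no route to {dst}")
    return max(matches, key=lambda m: m[0].prefixlen)[1]


def trace(src: str, dst: str, routes: Dict[str, RouteTable] = ROUTES,
          max_hops: int = 8) -> List[str]:
    """Follow next hops from src until a route delivers the packet to dst."""
    path, node = [src], src
    for _ in range(max_hops):
        hop = next_hop(routes[node], dst)
        if hop == "DELIVER":
            return path + [dst]
        path.append(hop)
        node = hop
    raise RuntimeError("hop limit exceeded (routing loop?)")
```

Here `trace("spoke1-vm", "10.20.1.5")` returns `["spoke1-vm", "hub1-nva", "hub2-nva", "10.20.1.5"]`, and the return trace from `spoke2-vm` crosses the same two NVAs in reverse. Keeping the forward and return paths symmetric matters when the hub NVAs are stateful firewalls, which typically drop flows they only see in one direction.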

OPTION 3 : Spoke-to-Spoke Global VNet peering

Pre-requisites:

- Global VNet peering between the spoke VNets

Implementation:

With the spoke VNets connected via Global VNet peering, traffic between them automatically takes the spoke-to-spoke peering using the system (default) routes that the peering creates, with no UDRs required, as shown in the image below.

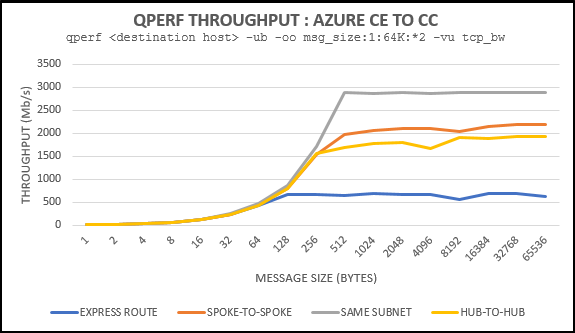
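Why no UDR is needed for OPTION 3 follows from Azure's route selection: the longest matching prefix wins, and on a prefix-length tie a user-defined route beats a BGP route, which beats a system route. The Python sketch below models only those two rules (it ignores Azure's special cases, such as peering system routes being preferred over more-specific BGP routes). The effective routes on the spoke 1 subnet, including the on-premises UDR to a hub NVA at 10.1.0.4, are hypothetical.

```python
import ipaddress
from dataclasses import dataclass
from typing import List, Optional

# On a prefix-length tie: user-defined route, then BGP, then system route.
SOURCE_PRIORITY = {"User": 0, "BGP": 1, "System": 2}


@dataclass(frozen=True)
class Route:
    prefix: str
    source: str    # "User", "BGP" or "System"
    next_hop: str


def select_route(routes: List[Route], dst: str) -> Optional[Route]:
    """Longest prefix wins; ties are broken by route source priority."""
    addr = ipaddress.ip_address(dst)
    candidates = [r for r in routes if addr in ipaddress.ip_network(r.prefix)]
    if not candidates:
        return None
    return min(candidates, key=lambda r: (-ipaddress.ip_network(r.prefix).prefixlen,
                                          SOURCE_PRIORITY[r.source]))


# Hypothetical effective routes on the spoke 1 subnet after spoke-to-spoke peering.
SPOKE1_ROUTES = [
    Route("10.10.0.0/16", "System", "VnetLocal"),
    Route("10.20.0.0/16", "System", "VNetGlobalPeering"),        # created by the peering
    Route("0.0.0.0/0", "System", "Internet"),
    Route("192.168.0.0/16", "User", "VirtualAppliance 10.1.0.4"),  # on-premises via hub NVA
]
```

With these routes, traffic to 10.20.1.5 uses `VNetGlobalPeering`, while on-premises traffic (e.g. 192.168.1.10) still goes to the hub NVA. Adding a UDR for exactly `10.20.0.0/16` would tie on prefix length and win on priority, which is how the same spokes could be steered through the hubs as in OPTION 2.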

Network Performance Testing

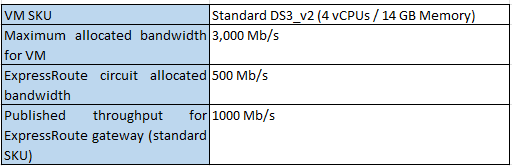

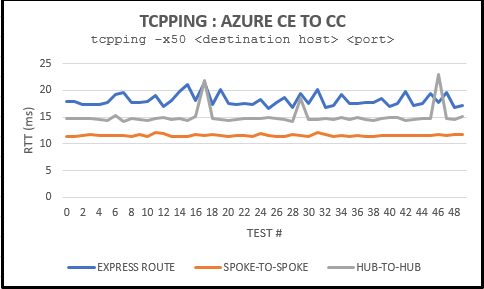

I used qperf and tcpping to measure throughput and RTT (round-trip time) between VMs in the two spokes (10.10.1.5 to 10.20.1.5) for all three options. The configuration specifications that have a bearing on the test results (i.e. the throughput limits) are shown in the images above.

As an additional check, I also measured throughput between two VMs in the same spoke subnet, to verify the network bandwidth allocation for the DS3_v2 VM SKU (verified!). Those results are also shown in the images above.
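For the RTT measurements, tcpping estimates round-trip time from how long a TCP handshake takes. The same idea can be sketched with Python's standard library: `socket.create_connection` returns once the handshake completes, so timing it gives roughly one round trip plus local overhead. This is an illustration of the technique, not tcpping or qperf themselves, and the target port in the usage note (22, assuming SSH is reachable on the destination VM) is an assumption.

```python
import socket
import statistics
import time
from typing import Dict, List


def tcp_connect_rtt_ms(host: str, port: int, count: int = 5,
                       timeout: float = 2.0) -> List[float]:
    """Time `count` TCP handshakes to host:port, in milliseconds."""
    samples = []
    for _ in range(count):
        start = time.perf_counter()
        # create_connection returns after the SYN / SYN-ACK / ACK exchange completes.
        with socket.create_connection((host, port), timeout=timeout):
            pass
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarize(samples: List[float]) -> Dict[str, float]:
    """Min/avg/max of the samples; the minimum is usually the best path-RTT estimate."""
    return {"min": min(samples), "avg": statistics.mean(samples), "max": max(samples)}
```

Run from the spoke 1 VM, `summarize(tcp_connect_rtt_ms("10.20.1.5", 22))` would give a comparable RTT figure for whichever of the three paths is currently active.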

The throughput of the ExpressRoute path (OPTION 1) shown above is capped by the bandwidth of the ExpressRoute circuit (500 Mb/s). Increasing the circuit bandwidth and upgrading the ExpressRoute gateway SKU to support higher throughput would likely cost more than either of the Global VNet peering options.
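To put the 500 Mb/s cap in perspective, a back-of-the-envelope transfer-time calculation helps. The 100 GB data volume and the efficiency factor are hypothetical, and the sketch ignores protocol overhead and any other traffic sharing the circuit.

```python
def transfer_seconds(gigabytes: float, link_mbps: float, efficiency: float = 1.0) -> float:
    """Time to move `gigabytes` (decimal GB) over a link of `link_mbps` megabits/s.

    `efficiency` is an assumed fraction of the line rate actually achieved.
    """
    megabits = gigabytes * 8 * 1000  # 1 GB = 8,000 Mb in decimal units
    return megabits / (link_mbps * efficiency)
```

At the full 500 Mb/s, `transfer_seconds(100, 500)` is 1,600 seconds (about 27 minutes) for a hypothetical 100 GB replication backlog, and the time scales down linearly with any higher bandwidth a peering path provides.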

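Regardless of the bandwidth allocated to the ExpressRoute circuit or the SKU selected for the ExpressRoute gateway, traffic via ExpressRoute (OPTION 1) has the highest latency, because it hairpins through the circuit's edge routers (MSEEs) at the ExpressRoute peering location instead of taking a direct path between the regions. This option is therefore not appropriate for latency-sensitive traffic.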
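Why latency matters for the application-to-database use case can be shown with two standard approximations: a chatty request that makes N sequential database calls pays N round trips, and a single TCP flow cannot move more than one window of data per round trip. The RTTs, call count and window size below are hypothetical, not my measurements.

```python
def sequential_request_ms(round_trips: int, rtt_ms: float, server_ms: float = 0.0) -> float:
    """Wall-clock time for a request making `round_trips` sequential database calls."""
    return round_trips * (rtt_ms + server_ms)


def max_single_flow_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on one TCP flow's throughput: at most one window in flight per RTT."""
    return window_bytes * 8 / (rtt_ms / 1000.0) / 1e6
```

With 50 sequential calls, doubling the RTT from 30 ms to 60 ms takes the request from 1.5 s to 3.0 s, and a 1 MiB window at 20 ms RTT caps a single flow at roughly 419 Mb/s, so higher latency also lowers per-flow throughput.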

Based on these observations, I would address the two use cases as follows:

- Use spoke-to-spoke Global VNet peering (OPTION 3) for SQLMI replication traffic; this is also what Microsoft recommends. A good way to do this is to allocate a dedicated spoke VNet for SQLMI in each region. Besides, there is no benefit in inspecting this traffic.

- Use hub-to-hub Global VNet peering (OPTION 2) for application-to-database traffic, so that you can inspect and police the traffic while still getting favorable latency and throughput.
